last weekend i took my first look at generative AI and have since been inspired. in this journey one of my new favorite technologies is Stable Diffusion. To not get to deep it enables generation of art very similar to DALL-E and the like.

after playing around with a handful of tools built that allowed me to experiment. experiment i did. since then i’ve actually generated thousands of examples, but when i tried to draw Raekwon from Wu Tang Clan, i found that like many others, stable-diffusion didn’t really know Raekwon enough to produce anything decent.

i saw this as opportunity to learn about training custom models based on existing models. in this case it’s stable-diffusion-1.5 (checkpoint).. the goal is to train the model to draw Raekwon.

12+ hours later and i’m still waiting for the training to finish.. i provided 30 example images and started a training, instructing it to create 10x the number of sample images (300) for the training data.

the process is brutally slow even on my M1Max but i’m very impressed so far.

i created this video to show the frames so far (84%) into a video and decided to post it here.

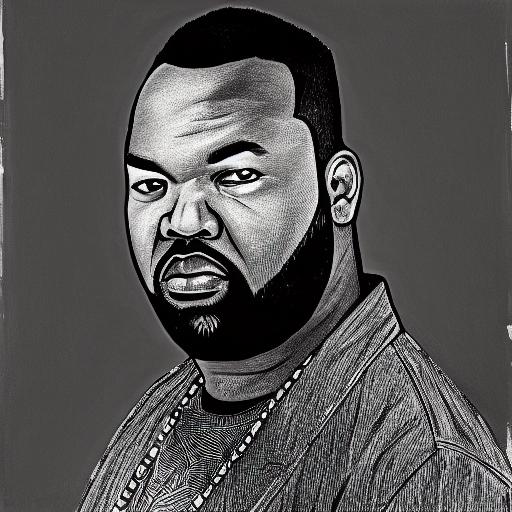

drawings generated during training process

i still think it has a way to go but it’s interesting to watch it work..

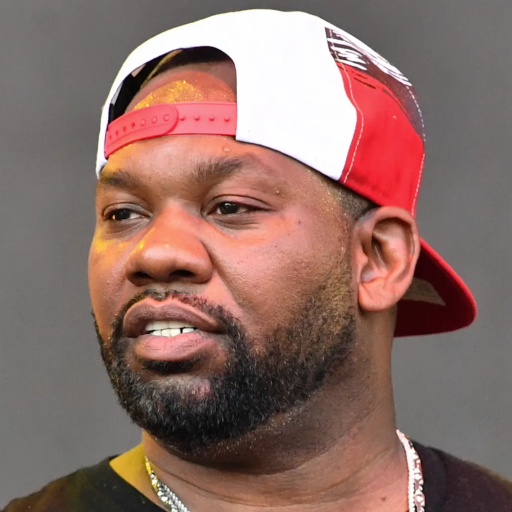

here’s one of the better results, but still not quite right

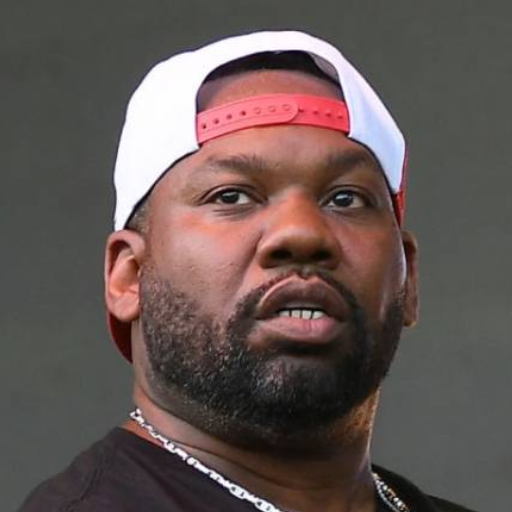

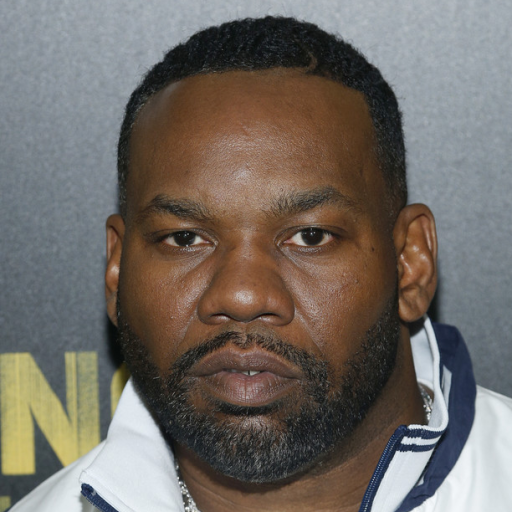

a meh example

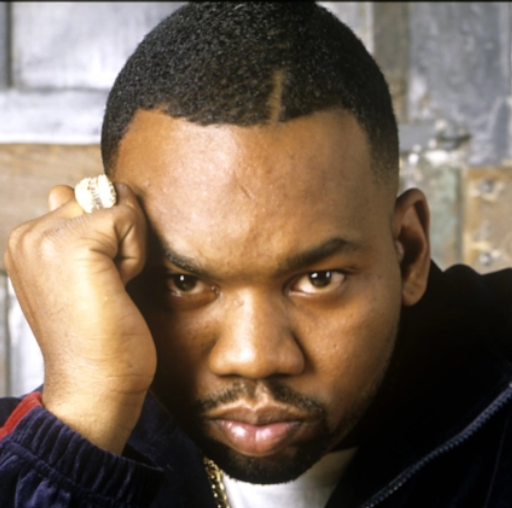

a meh example

update: ok.. the first phase seems to be complete. we have 300 new (class) images, but now it’s running the actual training which will require 2500 steps.. as stated, i’m figuring this out and learning as i go, but was very happy to see the web ui was able reload the params for training.

while that continues over next 3 hours or more (we’ll see) i’m posting the training images i provided as a source using Dreambooth via Stable Diffusion Web UI.

here’s the complete set of class images that were generated during the first step of this process.

more updates to come..